We found that because AI is so powerful, it can differentiate many obscure biological signals that cannot be detected by standard human evaluation.

Kun-Hsing Yu

Analyzing several major pathology AI models designed to diagnose cancer, the researchers found unequal performance in detecting and differentiating cancers across populations based on patients’ self-reported gender, race, and age. They identified several possible explanations for this demographic bias.

FAIR-Path Framework Helps Reduce Bias in Medical AI

The team then developed a framework called FAIR-Path that helped reduce bias in the models. “Reading demographics from a pathology slide is thought of as a ‘mission impossible’ for a human pathologist, so the bias in pathology AI was a surprise to us,” said senior author Kun-Hsing Yu, associate professor of biomedical informatics in the Blavatnik Institute at HMS and HMS assistant professor of pathology at Brigham and Women’s Hospital.

Identifying and counteracting AI bias in medicine is critical because such bias can affect diagnostic accuracy as well as patient outcomes, Yu said. FAIR-Path’s success indicates that researchers can improve the fairness of AI models for cancer pathology, and perhaps other AI models in medicine, with minimal effort.

Study Reveals Bias in AI Models for Cancer Diagnosis

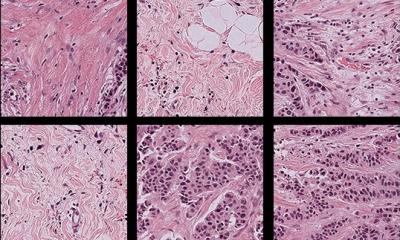

Yu and his team investigated bias in four standard AI pathology models being developed for cancer evaluation. These deep-learning models were trained on sets of annotated pathology slides, from which they “learned” biological patterns that enable them to analyze new slides and offer diagnoses. The researchers fed the AI models a large, multi-institutional repository of pathology slides spanning 20 cancer types.

Diagnostic Accuracy Varied Across Demographic Groups

They discovered that all four models showed biased performance, providing less accurate diagnoses for patients in specific groups based on self-reported race, gender, and age. For example, the models struggled to differentiate lung cancer subtypes in African American and male patients, and breast cancer subtypes in younger patients. The models also had trouble detecting breast, renal, thyroid, and stomach cancer in certain demographic groups. These performance disparities occurred in around 29% of the diagnostic tasks that the models conducted. This diagnostic inaccuracy, Yu said, happens because these models extract demographic information from the slides and rely on demographic-specific patterns to make a diagnosis.

The results were unexpected “because we would expect pathology evaluation to be objective,” Yu added. “When evaluating images, we don’t necessarily need to know a patient’s demographics to make a diagnosis.”
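To make the idea of a performance disparity concrete, here is a minimal sketch of how accuracy might be compared across demographic groups for a single diagnostic task. It is not the researchers' evaluation code. The function names, group names, subtype labels, and records are all hypothetical, and the study may have used different metrics.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for (group, true_label, predicted_label) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        if truth == predicted:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference in accuracy between the best- and worst-served groups."""
    return max(per_group.values()) - min(per_group.values())


# Made-up toy records for illustration only, not data from the study.
records = [
    ("group_a", "subtype_1", "subtype_1"),
    ("group_a", "subtype_2", "subtype_2"),
    ("group_a", "subtype_1", "subtype_1"),
    ("group_a", "subtype_2", "subtype_1"),
    ("group_b", "subtype_1", "subtype_2"),
    ("group_b", "subtype_2", "subtype_2"),
    ("group_b", "subtype_1", "subtype_2"),
    ("group_b", "subtype_2", "subtype_2"),
]

per_group = accuracy_by_group(records)
gap = accuracy_gap(per_group)
print(per_group, gap)
```

In this toy data the hypothetical model is right three times out of four for one group and two times out of four for the other, the kind of gap an audit like the one described here would flag.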

The team wondered: Why didn’t pathology AI show the same objectivity? The researchers landed on three explanations. First, because it is easier to obtain samples from patients in certain demographic groups, the AI models are trained on unequal sample sizes. As a result, the models have a harder time making an accurate diagnosis for groups that aren’t well represented in the training set, such as minority groups based on race, age, or gender.

Yet “the problem turned out to be much deeper than that,” Yu said. The researchers noticed that sometimes the models performed worse in one demographic group, even when the sample sizes were comparable. Additional analyses revealed that this may be because of differential disease incidence: Some cancers are more common in certain groups, so the models become better at making a diagnosis in those groups. As a result, the models may have difficulty diagnosing cancers in populations where they aren’t as common.
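The two explanations so far, unequal sample sizes and differential disease incidence, are distinct properties of a training set. As a rough sketch, each can be checked with a simple tally. The helper names, group names, and labels below are hypothetical, and the counts are invented for illustration rather than taken from the study.

```python
from collections import Counter


def group_sizes(samples):
    """Count how many (group, label) samples each group contributes."""
    return Counter(group for group, _ in samples)


def label_share_by_group(samples, label):
    """Fraction of each group's samples that carry the given label."""
    sizes = group_sizes(samples)
    hits = Counter(group for group, lab in samples if lab == label)
    return {group: hits[group] / sizes[group] for group in sizes}


# Invented toy training set: both groups are the same size,
# but the hypothetical "cancer_x" label is far more common in group_a.
samples = (
    [("group_a", "cancer_x")] * 6
    + [("group_a", "other")] * 4
    + [("group_b", "cancer_x")] * 1
    + [("group_b", "other")] * 9
)

sizes = group_sizes(samples)
shares = label_share_by_group(samples, "cancer_x")
print(sizes, shares)
```

Here the groups are equally represented, yet a model would see six examples of the hypothetical cancer in one group and only one in the other, which mirrors the situation where sample sizes are comparable but incidence differs.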